Kled’s “Pay for Your Camera Roll” App Promises Quick Cash, but May Be Normalising Mass Data Extraction Across the Global South, Raising Ethical Concerns

A rapidly expanding artificial intelligence startup, Kled, is facing mounting scrutiny after blocking access in Nigeria amid allegations of widespread fraud on its platform, raising serious questions about the ethics of a business model built on harvesting personal data on a global scale. Kled pays users to upload photos and videos from their personal devices, including entire camera rolls, which it says it licenses to companies developing AI systems. The company markets itself as a way for individuals to "get paid for your data," presenting everyday digital life as a monetisable asset for AI training. It asserts that uploads are private, consensual, and distributed solely under licensing agreements.

Behind this marketing, however, the model has drawn significant concern from researchers and industry experts, who question whether the system genuinely protects users or merely repackages personal data extraction in exchange for small financial incentives.

The decision to block users in Nigeria, which the company attributes to extremely high fraud rates and large volumes of manipulated or synthetic uploads, has intensified that scrutiny. Kled alleges that most uploads from the country are not authentic personal data but rather duplicated images, content taken from the internet, AI-generated material and, in some cases, falsified identity documents submitted to its verification systems.

Yet the company has not publicly disclosed its detection methods or provided verifiable evidence for its claims. This opacity invites criticism that its "fraud rate" assessments are subjective or inconsistent, or that they serve as a justification for sweeping regional bans.

This situation exposes a fundamental tension in Kled’s design: the need to maximise upload volume while maintaining authenticity at scale. Paying users for data creates a system prone to exploitation, especially in regions where financial incentives are considerable relative to local incomes.


I put on my fraud detection hat whenever I see a 22 year old Tech bro who supposedly dropped out of college to fund an AI startup. In this case, what I found about this Kled guy is incredibly disturbing.

K5 Global is Kled’s lead investor. K5 Global is a firm that frequently… https://t.co/5s34mirCkU

— Chetuya Math Chinagolum (@Chetuyachinago) May 5, 2026

This flaw is not incidental but inherent to a model that treats personal data as a commodity while encouraging low-friction participation. As a result, the platform is prone to surges of low-quality or synthetic submissions that overwhelm moderation systems and prompt blunt enforcement measures such as geographic bans.

The ethical issues extend beyond fraud. Kled's model encourages users to upload highly sensitive personal content, often including images of other people who have not consented, views of private spaces, and incidental metadata such as location data. While the company says users agree to its licensing terms, experts contend that formal consent does not necessarily imply informed understanding, especially given how broad and opaque the downstream uses of the data are.

Kled operates within a broader shift in the AI industry, which is moving away from scraping publicly available internet data toward licensed or user-contributed datasets in response to legal challenges. This transition has fostered an ecosystem of intermediaries that convert personal and behavioural data into structured training inputs for AI systems.

The company's investor base, which includes K5 Global and Aglaé Ventures, both of which back AI and data infrastructure startups, invites further scrutiny. While there is no public evidence linking Kled directly to government surveillance or defence contractors, its place within the wider AI investment network raises questions about how user-generated datasets may eventually circulate through the industry.

This concern is heightened by the broader data ecosystem, which includes firms such as Palantir Technologies, known for large-scale analytics used across the commercial and government sectors. Although no direct link has been established, the structure of the AI data economy means that consumer-collected information can, in theory, be transformed and integrated into downstream analytical pipelines.

The real issue is systemic opacity, not covert coordination. Users contributing personal data rarely see how it is ultimately used, who accesses it, or how long it remains in training datasets.

Kled says its suspension in Nigeria is temporary while it improves fraud detection and enforcement. Nevertheless, the incident reveals a fundamental fragility in the emerging market for AI training data: a reliance on global user participation, uneven enforcement, and limited transparency about the data's full lifecycle.

As competition for high-quality training data intensifies, the core tension remains unresolved. Platforms like Kled promise financial inclusion through data monetisation, but they normalise a system in which highly personal human data is extracted at scale under conditions users cannot fully understand or meaningfully negotiate.

About The Author

Related Articles

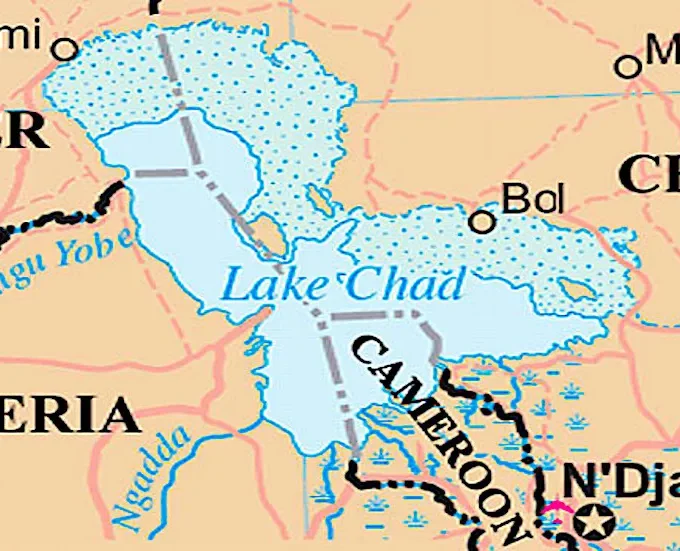

Boko Haram Attack Kills Over 20 Soldiers on Chad’s Lake Chad Island

At least 23 Chadian soldiers were killed and 26 others wounded in...

ByWest Africa WeeklyMay 6, 2026ECOWAS Confronts Ghana Over New Airport Taxes, Warns Of Regional Aviation Damage

A sharp dispute has broken out between the Economic Community of West...

ByWest Africa WeeklyMay 5, 2026Mali’s Leader Takes Defence Role After Minister’s Killing

Mali’s military leader, General Assimi Goïta, has appointed himself as the country’s...

ByWest Africa WeeklyMay 5, 2026Three Dead, Several Ill as Suspected Hantavirus Spreads on Cruise Ship Near West Africa

A cruise ship carrying dozens of passengers has been denied permission to...

ByWest Africa WeeklyMay 5, 2026

Leave a comment